Synthetic Research & Silicon Sampling // BrXnd Dispatch vol. 010

On research, large language models, and a future of interviewing fake humans

Hi everyone. Welcome to the BrXnd Dispatch: your bi-weekly dose of ideas at the intersection of brands and AI. I hope you enjoy it and, as always, send good stuff my way. NYC spring 2023 Brand X AI conference planning is in full effect (targeting mid-May🤞🤞). If you are interested in speaking or sponsoring, please be in touch.

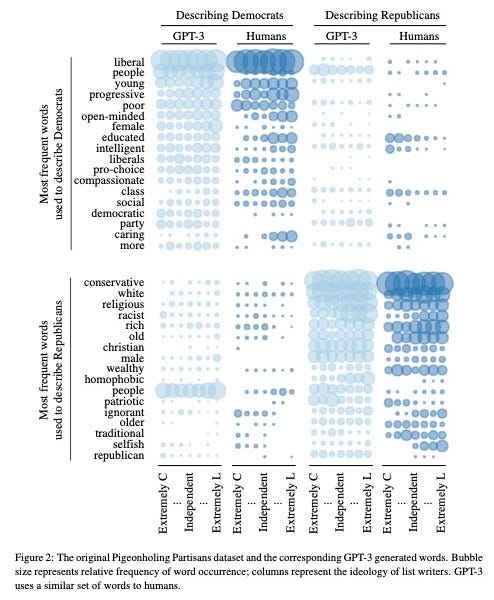

A few weeks ago, I heard the term “silicon sampling” for the first time. As Paul Aaron laid out in an excellent piece for the Addition newsletter, the basic idea is that GPT-3 and similar large language models can simulate the opinions of real people for polling and research purposes. The September 2022 paper “Out of One, Many: Using Language Models to Simulate Human Samples” lays out evidence that the biases of these models can be used as a feature to generate synthetic citizens for research purposes.

The paper’s authors refer to this capability as “algorithmic fidelity,” which is more easily understood as the ability to induce the model to produce answers that correlate with the opinions of specific groups:

Our conception of algorithmic fidelity goes beyond prior observations that language models reflect human-like biases present in the text corpora used to create them. Instead, it suggests that the high-level, human-like output of language models stems from human-like underlying concept associations. This means that given basic human demographic background information, the model exhibits underlying patterns between concepts, ideas, and attitudes that mirror those recorded from humans with matching backgrounds. To use terms common to social science research, algorithmic fidelity helps to establish the generalizability of language models, or the degree to which we can apply what we learn from language models to the world beyond those models.

They went on to test this fidelity across several criteria, including how well the model could recreate survey results.
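To make the idea concrete, here is a minimal Python sketch of what conditioning a model on demographic background might look like. It is not the paper's actual code. The `complete` argument is a hypothetical stand-in for whatever language model call you use, and the profile fields (`age`, `state`, `ideology`) and function names are illustrative assumptions.

```python
from collections import Counter
from typing import Callable, Dict, List


def build_backstory(profile: Dict[str, str]) -> str:
    """Turn demographic fields into a short first-person backstory."""
    return (
        f"I am {profile['age']} years old. "
        f"I live in {profile['state']}. "
        f"Politically, I consider myself {profile['ideology']}."
    )


def silicon_sample(
    profiles: List[Dict[str, str]],
    question: str,
    complete: Callable[[str], str],  # hypothetical model call: prompt -> text
) -> Counter:
    """Ask the same question once per synthetic respondent and tally answers."""
    answers = []
    for profile in profiles:
        prompt = f"{build_backstory(profile)}\n{question}\nMy answer:"
        # Normalize so " Yes" and "yes" count as the same answer.
        answers.append(complete(prompt).strip().lower())
    return Counter(answers)


def answer_shares(counts: Counter) -> Dict[str, float]:
    """Convert raw tallies into shares that can sit next to real survey results."""
    total = sum(counts.values())
    return {answer: n / total for answer, n in counts.items()}
```

In practice you would pass in a function that calls a real model, then compare the output of `answer_shares` against the shares from a human panel that was asked the same question.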

While this paper focuses mainly on political polling, there are fascinating implications for marketing and brand research (as Paul also points out in his article). Brands spend millions of dollars annually studying consumer perception, product opportunities, and advertising messaging. If a large language model could produce even a small amount of this research, it would save companies huge amounts of time and money.

A big thank you to LinkedIn, Redscout, and Otherward for sponsoring the upcoming BrXnd conference and my work. I have various sponsor levels available for the event if you would like to support us. Be in touch (or reply to this newsletter), and I’m happy to send over the details.

To learn more about the approach and its application, I reached out to Hugo Alves, the co-founder of the newly launched company Synthetic Users, which practices this “silicon sampling” approach for product research. The idea for Synthetic Users came to Alves when he heard about the Google engineer who claimed the company’s language model, LaMDA, was sentient. If these models were convincing enough to trick engineers, Alves thought, then surely they could be used for product research. When he came across a few papers on the topic, including the research cited above, he decided to focus his energy on commercializing the approach. “You define your audience, you define what you feel the problems are, and you define your proposed solution,” said Alves, explaining how the Synthetic Users product works today. “Then the system generates up to 10 interviews each time you run it. It also generates a report from those interviews. So essentially, it’s an easy, almost real-time, way to get feedback on your ideas and propositions.”
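As a rough illustration of the three inputs Alves describes (audience, problems, proposed solution) and the cap of 10 interviews per run, here is a small Python sketch. It is only my guess at the shape of such a request, not how Synthetic Users is actually built. `StudyRequest`, `interview_prompts`, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass
from typing import List

MAX_INTERVIEWS = 10  # "up to 10 interviews each time you run it"


@dataclass
class StudyRequest:
    """Hypothetical container for the three inputs described in the quote."""
    audience: str
    problems: List[str]
    solution: str
    interviews: int = MAX_INTERVIEWS

    def __post_init__(self) -> None:
        if not 1 <= self.interviews <= MAX_INTERVIEWS:
            raise ValueError(
                f"interviews must be between 1 and {MAX_INTERVIEWS}"
            )


def interview_prompts(request: StudyRequest) -> List[str]:
    """Build one interview prompt per synthetic participant."""
    problem_list = "; ".join(request.problems)
    return [
        (
            f"You are participant {i} in a user interview. "
            f"You belong to this audience: {request.audience}. "
            f"Problems you may recognize: {problem_list}. "
            f"React candidly to this proposed solution: {request.solution}"
        )
        for i in range(1, request.interviews + 1)
    ]
```

Each prompt would then go to a language model, and the resulting transcripts could be summarized into the kind of report Alves mentions.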

It’s in its early days, but the plan is to continue to simplify the product, requiring less direct knowledge of the solution. “In the future,” Alves explained, “we want this to be even richer, so you just define your audience like, ‘people in long-distance relationships,’ and you click a button, and it gives you a set of possible problems they have, and you can select from them instead of you having to go and put it in yourself.” I asked Hugo if he had plans to expand the target audience to marketers, and he said they’re still in the early stages of the product lifecycle but are leaving all avenues open.

One question all this raises for me is, “why?” If it is so easy to reproduce research from human panels, then on the one hand it makes tons of sense to do it cheaper and quicker using machine learning models. On the other hand, it makes you wonder whether that kind of research is worth anything in the first place. Anyone who has worked with large brands on research has seen all sorts of output, and a ton of marketing research exists only to ensure a marketing VP or agency has something to point to if everything goes wrong. Synthetic research seems like a perfect solution for this kind of study, where the goal is clearly not to gain deep insight into a target audience. When I posed this to Alves, who has a master’s in clinical psychology, he said, “One of the things I say to people who ask, ‘but does a language model have all the nuances and subtleties?’ And I’m like, ‘have you seen real research? Have you seen real user researchers doing it? And the feedback that sometimes you get it?’ Even with just our beta product, it’s comparable.”

That makes sense to me, at least in theory. I’m supposed to get access to the Synthetic Users product this week, and I am excited to have a play and share the results.

New BrXndscape Companies

New companies listed on BrXndscape, a landscape of marketing AI companies (writeup in case you missed it). If I missed anything, feel free to reply or add a company. (The companies are hand-picked, but the descriptions are AI-generated—part of an automated pipeline that grabs pricing, features, and use cases from each company’s website and one of many experiments I’ve got running at the moment.)

[Product Photography Generation] Pebblely: Pebblely helps you create beautiful product photos in seconds with the click of a button. It automatically removes the background from your images and creates photos with perfect lighting, reflections, and shadows. You can also resize and extend your images to any size, turning a single generated image into multiple marketing assets. Get 40 photos for free.

[Content Generation] Craftly.AI: Craftly provides users with 100+ expert-trained tools, continuous optimization, 25+ languages, live support, and long-form tools to help them create content quickly and easily.

[Email Generation] SellScale: SellScale AI is a powerful AI-powered outreach platform that helps you generate, send, and tune outbound that converts. It integrates with common sales tools to automatically populate personalizations, and its AI learning capabilities help you optimize personalizations to the persona you’re reaching out to. It also adapts outbound to your brand, industry, and personas, so you can reach the right people with the right message.

Thanks for reading. If you want to continue the conversation, feel free to reply, comment, or join us on Discord. Also, please share this email with others you think would find it interesting.

— Noah
