The Gap // BRXND Dispatch vol 103

The distance between people using coding agents and everyone else has never been wider. Plus, new models, Anthropic’s Super Bowl play, and why AI makes you work more, not less.

You’re getting this email as a subscriber to the BRXND Dispatch, a newsletter at the intersection of marketing and AI. Forward it to a friend, they’d like that, and so would we.

James Wang, formerly at ARK and Nvidia and now at Cerebras (which has just about the fastest models on the planet), wrote something this week that stuck with me: “I’ve never felt a larger gap than between the ~1 million people using Codex/Claude and the rest of humanity.” I think he’s right. It’s been a busy two weeks (ByteDance released Seedance 2.0, Anthropic shipped Sonnet 4.6, and Google dropped Gemini 3.1 Pro, among other news), but the real story is that gap. The tools are changing what work looks like, but only for the people who use them.

This issue is a link roundup. Lots going on.

Model releases

It was a big couple of weeks for model releases. Anthropic shipped Claude Sonnet 4.6. Like the new Opus, it has a 1-million-token context window option, which can get you a pretty long way. The Sonnet line has become the workhorse of the whole Claude family, and this release cements that.

Meanwhile, Google released Gemini 3.1 Pro, which scored 77.1% on ARC-AGI-2, more than double its predecessor's score (not that I ever pay any attention to these sorts of things: vibes > evals for life). Google is emphasizing the model's creative capabilities, especially in animation.

OpenAI shipped gpt-realtime-1.5 for the Realtime API, with improved instruction-following and multilingual accuracy. Honestly, of all the releases, I’m probably most excited to dig in here. We really need a reliable voice model. There’s so much promise there, but I mostly find myself getting annoyed when it gets stuck in a loop or fails to call tools, and I end up giving up.

ByteDance released Seedance 2.0, and it’s been described as Hollywood’s “DeepSeek moment.” The model generates 1080p video with unified audio: rather than layering sound onto visuals after the fact, it’s trained on both simultaneously, which is the right way to do it and something no one else has pulled off at this quality level. Disney and Paramount have already sent cease-and-desist letters alleging copyright infringement. ByteDance said it’s “strengthening safeguards.” Seedance 2.0 isn’t officially available outside China yet, but it’s coming to CapCut, which means it’s coming to TikTok creators worldwide.

Coding agents are eating everything

I’ve been saying for a while that AI is a fuzzy interface—its core superpower is transforming data from one format to another. The coding agent wave is a specific case of that: transforming human intent into working software with less and less friction. It feels like we’re seeing real takeoff on this use case. (If you didn’t listen to my Bloomberg Odd Lots episode on Claude Code from earlier in the month, that’s a good place to start.)

Why is Claude Code so addictive? Game designer Aaron Rutledge believes part of the reason is that it has “game feel.”

The headline: Spotify says its best developers haven’t written a line of code since December. An internal system called “Honk,” built on Claude Code, lets engineers deploy fixes from their phones via Slack. I’ve been working on something similar for our team at Alephic, and it’s pretty amazing what you can do right now with coding agents like Claude Code running in sandboxes.

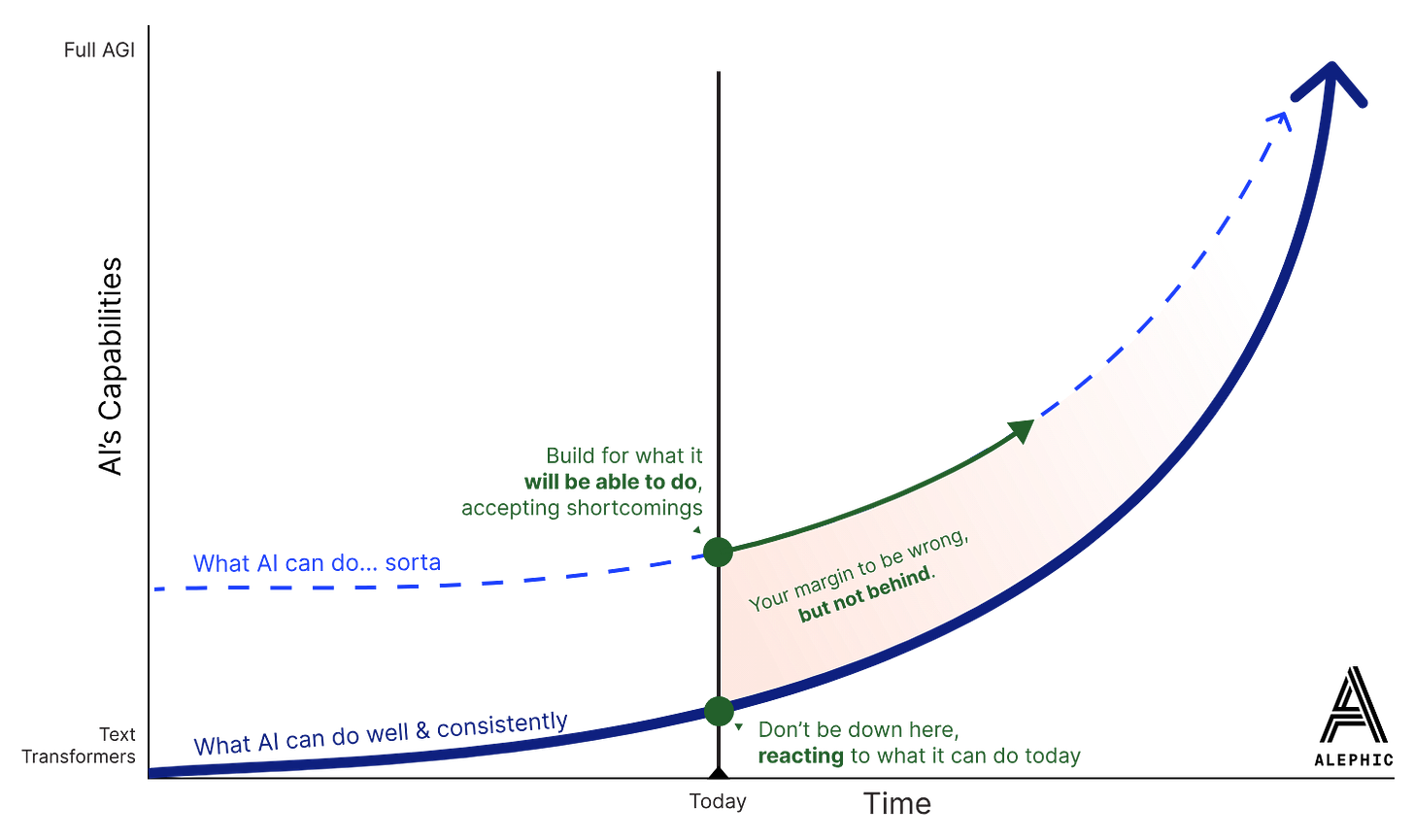

Boris Cherny, the head of Claude Code, sat down with Lenny Rachitsky and said some things worth paying attention to. Claude Code now powers 4% of public GitHub commits. He called coding “solved” and shared a whole bunch of useful ideas, including “Build for the model six months from now, not today.” Here’s a great post from last year by Charles Gallant, one of my colleagues, on the topic. He also made this helpful diagram:

Figma introduced Claude Code integration. You can now capture live UI from a browser—including localhost—and convert it into editable Figma frames. Design-to-code has existed for years. Code-to-design is new. My initial take on this was kinda 🤷 … once something is in code, I can’t really imagine why you’d want to bring it back to Figma, but maybe that’s a lack of imagination on my part.

A few more quick ones from the coding agent world: Replit launched Animation (“vibecode your next viral video in minutes,” powered by Gemini 3.1 Pro). Airtable launched Hyperagent, giving every agent session its own isolated cloud computing environment. And Karpathy distilled GPT into 243 lines of pure Python: no dependencies, just the full algorithmic content. Everything else, he says, is for efficiency.

Paul Ford wrote a piece in the NYT describing what he calls a “November moment” for software. Tools like Claude Code made it trivially fast to ship side projects and revive old ideas.

The plumbing got good

If the coding agents are the visible part of this shift, the infrastructure underneath them is what makes it real. This was a standout two weeks for agent plumbing.

Cloudflare introduced Code Mode for their MCP server, and the numbers are wild: they fit an entire 2,500+ endpoint API schema into ~1,000 tokens, down from 1.17 million. That’s a 99.9% reduction in context usage for agents making tool calls. They plan to open this approach to other MCP servers through their portal. If MCP is going to scale, this kind of optimization is how.
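For a sense of scale, the arithmetic behind that reduction is easy to sanity-check. The snippet below is only a back-of-the-envelope sketch using the two token counts quoted above (the ~1,000 figure is approximate); the function name is my own and has nothing to do with Cloudflare's tooling.

```python
def context_reduction(before_tokens: int, after_tokens: int) -> float:
    """Return the fractional drop in token usage (0.5 means a 50% cut)."""
    if before_tokens <= 0:
        raise ValueError("before_tokens must be positive")
    return 1 - after_tokens / before_tokens


# Figures quoted above: roughly 1.17 million tokens down to roughly 1,000.
reduction = context_reduction(1_170_000, 1_000)
print(f"{reduction:.1%}")  # prints 99.9%
```

Put differently, the schema shrinks by a factor of about 1,170, which is the difference between blowing out a context window and barely noticing the tool definitions are there.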

Simon Willison started “Agentic Engineering Patterns”—a living document of best practices for working with AI coding agents. Willison has been one of the best writers about the practical side of AI development, and this is worth bookmarking.

OpenAI published the Codex app-server protocol, a bidirectional JSON-RPC 2.0 interface that lets you embed Codex directly into your own products. It covers auth, conversation history, approval workflows, and streamed events.
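If you haven't worked with JSON-RPC 2.0 before, here is a rough sketch of what "bidirectional" means in practice. The envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`) come from the JSON-RPC 2.0 spec, but every method name below is a placeholder I made up for illustration, not something taken from the Codex protocol, and the single shared id counter is a simplification.

```python
import json
from itertools import count

_next_id = count(1)  # simplification: one id counter shared by both sides


def request(method, params):
    """A call that expects a response (it carries an id)."""
    return {"jsonrpc": "2.0", "id": next(_next_id), "method": method, "params": params}


def notification(method, params):
    """A one-way message: no id, so no response is expected."""
    return {"jsonrpc": "2.0", "method": method, "params": params}


def result(request_id, value):
    """A successful response, matched to its request by id."""
    return {"jsonrpc": "2.0", "id": request_id, "result": value}


def classify(message):
    """Tell the three JSON-RPC 2.0 message kinds apart by their fields."""
    if "method" in message:
        return "request" if "id" in message else "notification"
    if "result" in message or "error" in message:
        return "response"
    raise ValueError("not a JSON-RPC 2.0 message")


# Hypothetical exchange; all method names are placeholders, not real Codex methods.
client_call = request("example/sendPrompt", {"text": "Fix the failing test"})
server_event = notification("example/outputDelta", {"text": "Looking at the test..."})
server_ask = request("example/approveCommand", {"command": "pytest"})  # server to client
client_answer = result(server_ask["id"], {"approved": True})

for msg in (client_call, server_event, server_ask, client_answer):
    print(classify(msg), json.dumps(msg))
```

The part that matters for approval workflows is the third message: the server is the one making a request, and the client answers it. Streamed events fit naturally as notifications, since nothing needs to be sent back.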

Firecrawl shipped Browser Sandbox—managed secure environments where agents interact with the web. Kimi introduced Agent Swarm: 100 sub-agents running in parallel, 4.5x faster than single-agent approaches, and they avoid groupthink by having agents disagree with each other. (They also made it super easy to get your own OpenClaw running.)

AI and work

This section has the most tension in it, I think.

HBR published an eight-month study that found AI doesn’t reduce work—it intensifies it. Employees worked faster, expanded their task scope, and worked longer hours, even though nobody asked them to. The productivity surge feels great at first, but the researchers argue it creates workload creep, cognitive fatigue, and weakened decision-making over time.

Meanwhile, the efficiency benchmarks keep moving. SaaStr reports that $500K ARR per employee is the new baseline—up from $200K. AI-native companies like Cursor and Midjourney are hitting $3–5 million per head.

KPMG is forcing its auditors to accept lower fees because AI can now automate accounting—effectively announcing to the world that its core service is being commoditized.

The FT reported that consulting firms are resorting to “carrot and stick” approaches with senior staff who are less willing to use AI than their junior colleagues. Which is to say: the adoption problem inside large organizations isn’t technical, it’s cultural.

One more for the marketers: Airbnb’s CEO said that traffic from AI chatbots converts at a higher rate than traffic from Google.

And keeping it all in perspective, Ethan Mollick reminded everyone that “People on [X] systematically overestimate the speed at which companies can deeply adopt AI & underestimate the impact of AI’s jagged abilities in limiting AI’s utility in the short run. Work will certainly start to change but companies have a lot of inertia & change slower.”

Business of AI

Anthropic’s Super Bowl ads worked. The campaign (“Ads are coming to AI. But not to Claude”) delivered an 11% jump in daily active users and pushed Claude into the App Store top 10. Altman called the ads “deceptive” and “clearly dishonest.” Then they refused to cuddle at an Indian AI event.

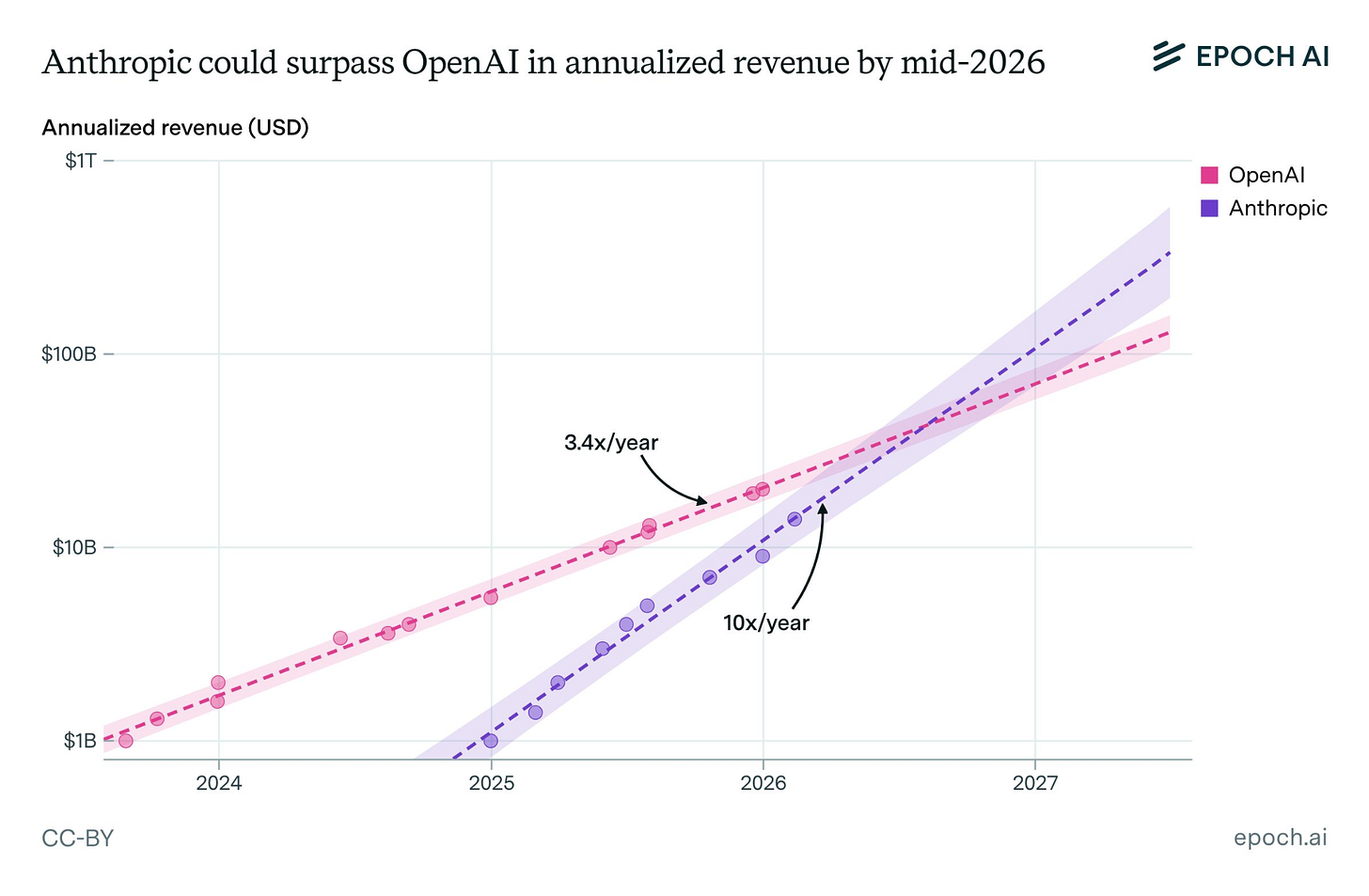

Epoch AI data shows Anthropic growing revenue 10x per year since hitting $1B in annualized revenue, versus OpenAI’s 3.4x. If those trajectories hold, Anthropic could overtake OpenAI by mid-2026.
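The crossover math is simple enough to sketch. If both companies keep growing at constant annual multiples, the time until the challenger catches up depends only on today's revenue ratio and the two growth rates. The growth multiples below are the ones quoted above; the starting ratio is a hypothetical input I picked for illustration, not a number from Epoch's data.

```python
import math


def years_to_crossover(revenue_ratio: float, leader_growth: float,
                       challenger_growth: float) -> float:
    """Years until the challenger's revenue matches the leader's.

    revenue_ratio is leader revenue divided by challenger revenue today.
    Growth values are annual multiples (10 means 10x per year) and are
    assumed to stay constant, which real companies never manage for long.
    """
    if revenue_ratio <= 0:
        raise ValueError("revenue_ratio must be positive")
    if challenger_growth <= leader_growth:
        raise ValueError("challenger never catches up at these growth rates")
    # Solve challenger_growth**t == revenue_ratio * leader_growth**t for t.
    return math.log(revenue_ratio) / math.log(challenger_growth / leader_growth)


# HYPOTHETICAL starting ratio for illustration only (leader at 2x challenger).
years = years_to_crossover(revenue_ratio=2.0, leader_growth=3.4, challenger_growth=10.0)
print(f"{years * 12:.1f} months")
```

The takeaway is how insensitive the answer is to the starting gap: with a growth ratio of roughly 3x per year, even a sizable lead evaporates within a year or so. The whole projection hinges on those multiples holding, which is a big if.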

On the OpenAI side, they announced a “Frontier Alliance” with Accenture, BCG, Capgemini, and McKinsey. With all the enterprise attention on Anthropic/Claude Code, this makes sense. OpenAI is going the enterprise distribution route through the big consultancies. Whether those consultancies are training their own replacements is a question left as an exercise for the reader.

Ideas worth reading slowly

Simon Willison wrote about “cognitive debt.” The idea: when developers use AI to generate code they don’t understand, they lose their mental model of the system. Over time, they can’t reason about their own projects. I have lots more thoughts about this that I’m trying to pull together.

Antirez (the creator of Redis) posted something that pairs well with the cognitive debt idea: “Software is created for accumulation of knowledge. AI is not going to cancel this fact. Forget the idea that programs will be prompts (specifications). The details is what really matters, and they are harder to capture textually than in the code. New projects will be spec + code, evolving.”

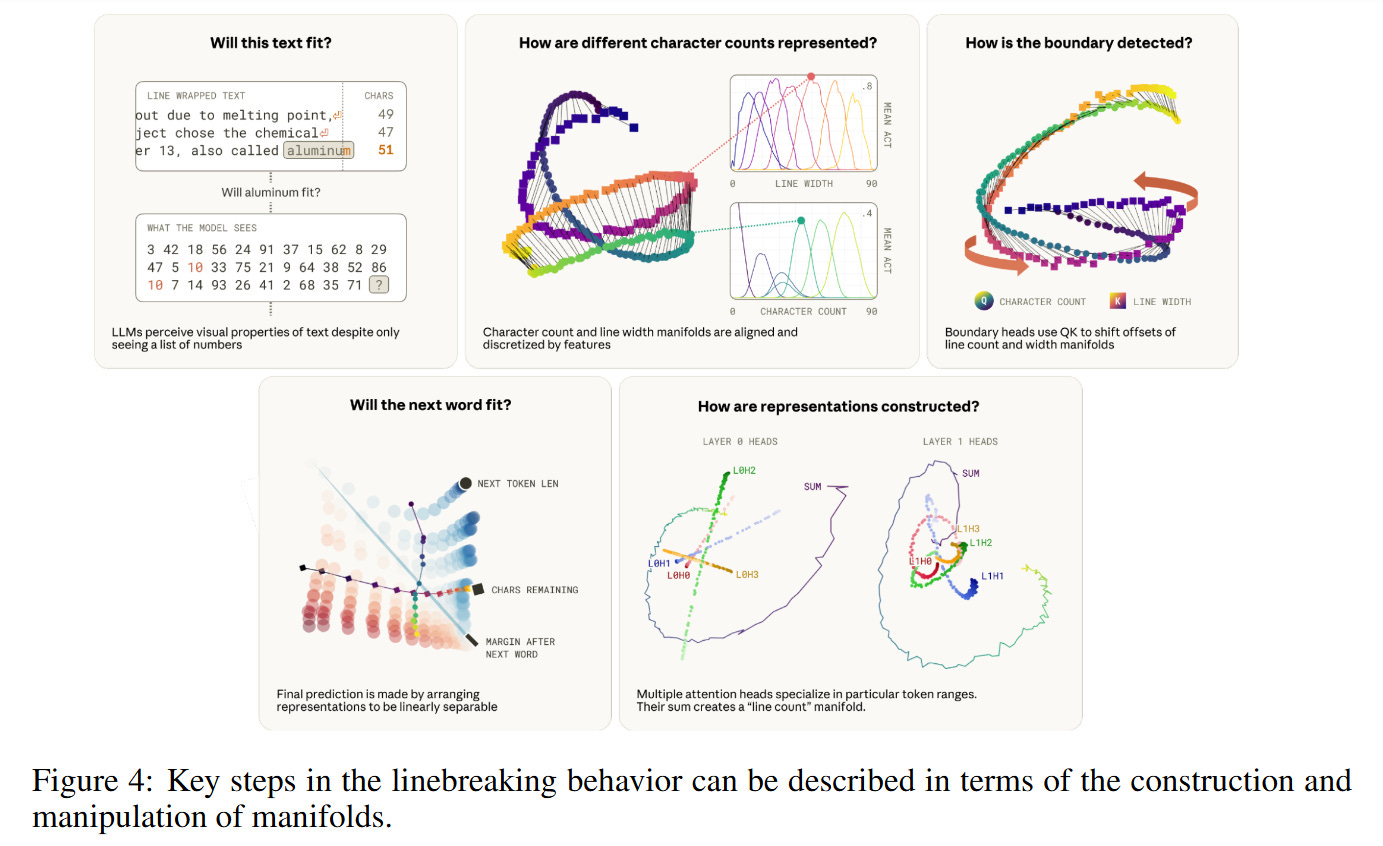

On the more technical side: Anthropic researchers reverse-engineered how Claude performs character counting and found it doesn’t use anything like integer registers. Instead, it encodes counts as a spiraling “character count manifold” in the residual stream via geometric rotations across attention heads. Transformers solve discrete counting through continuous geometry. 🤯

Quick hits

MakeUGC.ai generates AI-powered UGC videos in about two minutes—write a script, pick actors, done.

Designer Chad Pugh shared a workflow for AI-assisted logo components—one of the more practical design-with-AI walkthroughs I’ve seen.

Anthropic launched Claude Code security scanning: it reads your codebase, finds vulnerabilities, validates the findings, and suggests patches.

Dario Amodei sat down with Ross Douthat: “We don’t know if the models are conscious.” The full interview is worth your time. He also sat down with Dwarkesh Patel, whose podcast is always a favorite. I thought Dwarkesh asked some tough questions about the general direction of LLMs that Dario didn’t have great answers to.

Transformer News argues that the left is ceding the AI debate to the right, refusing to engage seriously with a technology that is both a threat and an opportunity.

If you have any questions, please be in touch. As always, thanks for reading.

— Noah