One Year Later // BrXnd Dispatch vol. 32

Catching up with 12 months of AI-ing.

You’re getting this email as a subscriber to the BrXnd Dispatch, a (roughly) weekly email at the intersection of brands and AI. I am planning some corporate AI events as well as my own conference in NYC for the spring (I’m not sure I’m going to pull off SF). For the NYC event, I am starting to look for speakers and sponsors, so if you think you fit into one of those buckets, please be in touch.

Saturday marks a year of this newsletter (and, I guess, a year of what I’ve been up to with BrXnd). Here’s what I wrote in the first edition:

Generally, I’ve become fascinated by what AI has to offer, but I think my interests are different than many others. While the focus is mostly on the text and visual generation, both of which are amazing, I have become pretty convinced that there’s some deep understanding of brands and marketing in these systems that I’m working on unearthing. Basically, I think that because branding is so much about generating patterns that lodge themselves in people’s brains, these systems are particularly well-suited partners in the process. And while much of the focus is rightly on the output that comes from that, I am equally interested in how to extract that perception and be able to use it as an input in the creative process.

I feel good about that! That still feels right to me. I’m still pretty disappointed by the state of the conversation happening around this stuff. Another surprising thing to me over the last twelve months is how slow the uptake has been in the enterprise. That sounds a bit crazy, given all the attention and chatter, but companies, especially large ones, are still struggling to figure out where to start with this stuff.

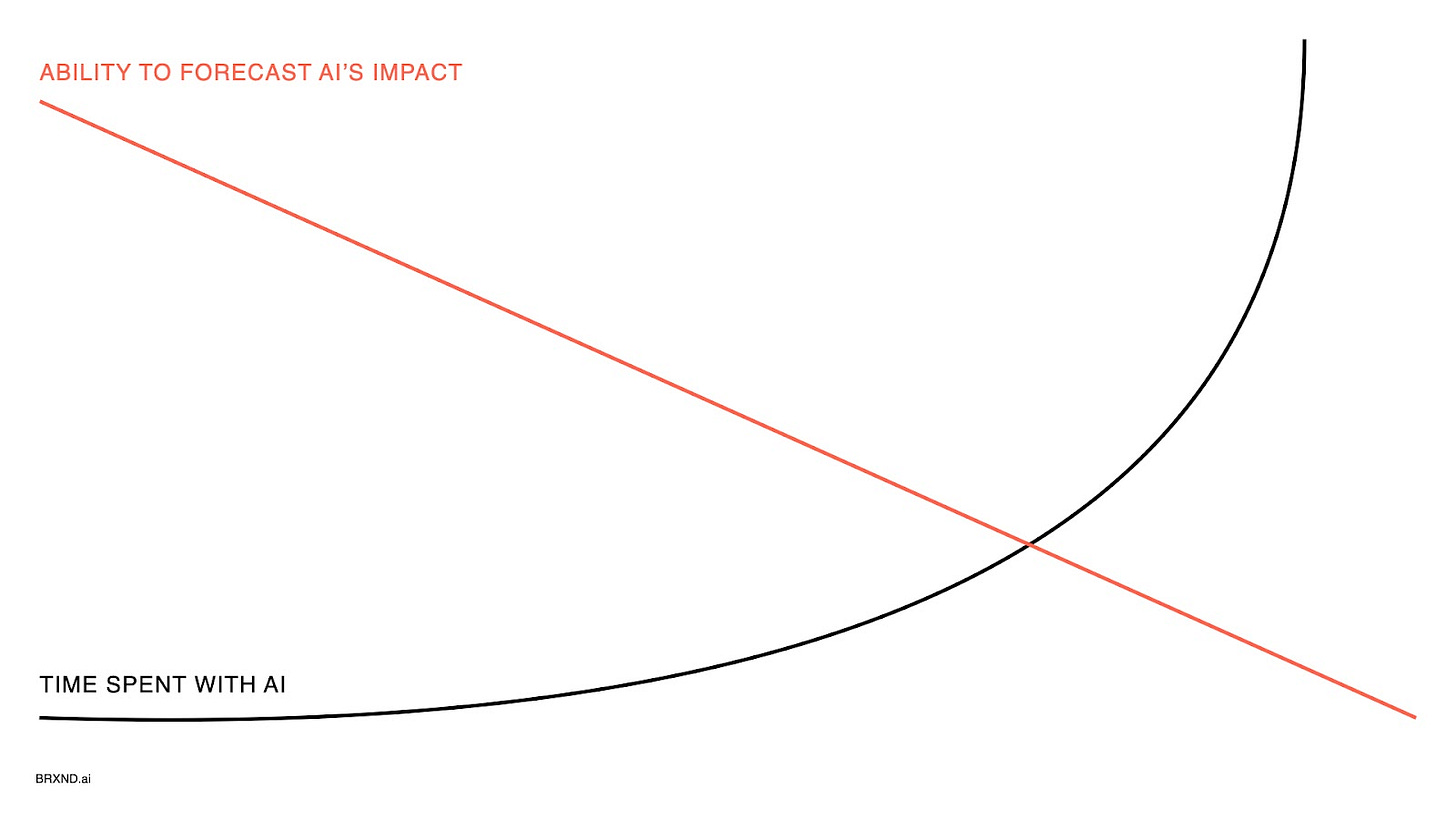

As far as I can tell, a big part of that is thanks to the legal questions still surrounding AI. But, at least in the conversations I’m having, another big component is just not knowing where to start. I get that! Here’s a slide from a presentation I’ve been giving lately:

I legitimately feel this way. The more time I spend with this technology, the less sure I feel I have anything to add about where it’s all headed. And I’m not alone. Here’s a great tweet from Miles Brundage, who does policy research at OpenAI:

I actually tried to find the original source of the philosophy quote, and the best I could come up with was this passage from a C.S. Lewis sermon titled “Learning in Wartime,” which also seems quite apt:

Good philosophy must exist, if for no other reason, because bad philosophy needs to be answered. The cool intellect must work not only against cool intellect on the other side, but against the muddy heathen mysticisms which deny intellect altogether. Most of all, perhaps, we need intimate knowledge of the past. Not that the past has any magic about it, but because we cannot study the future, and yet need something to set against the present, to remind us that the basic assumptions have been quite different in different periods and that much which seems certain to the uneducated is merely temporary fashion. A man who has lived in many places is not likely to be deceived by the local errors of his native village: the scholar has lived in many times and is therefore in some degree immune from the great cataract of nonsense that pours from the press and the microphone of his own age.

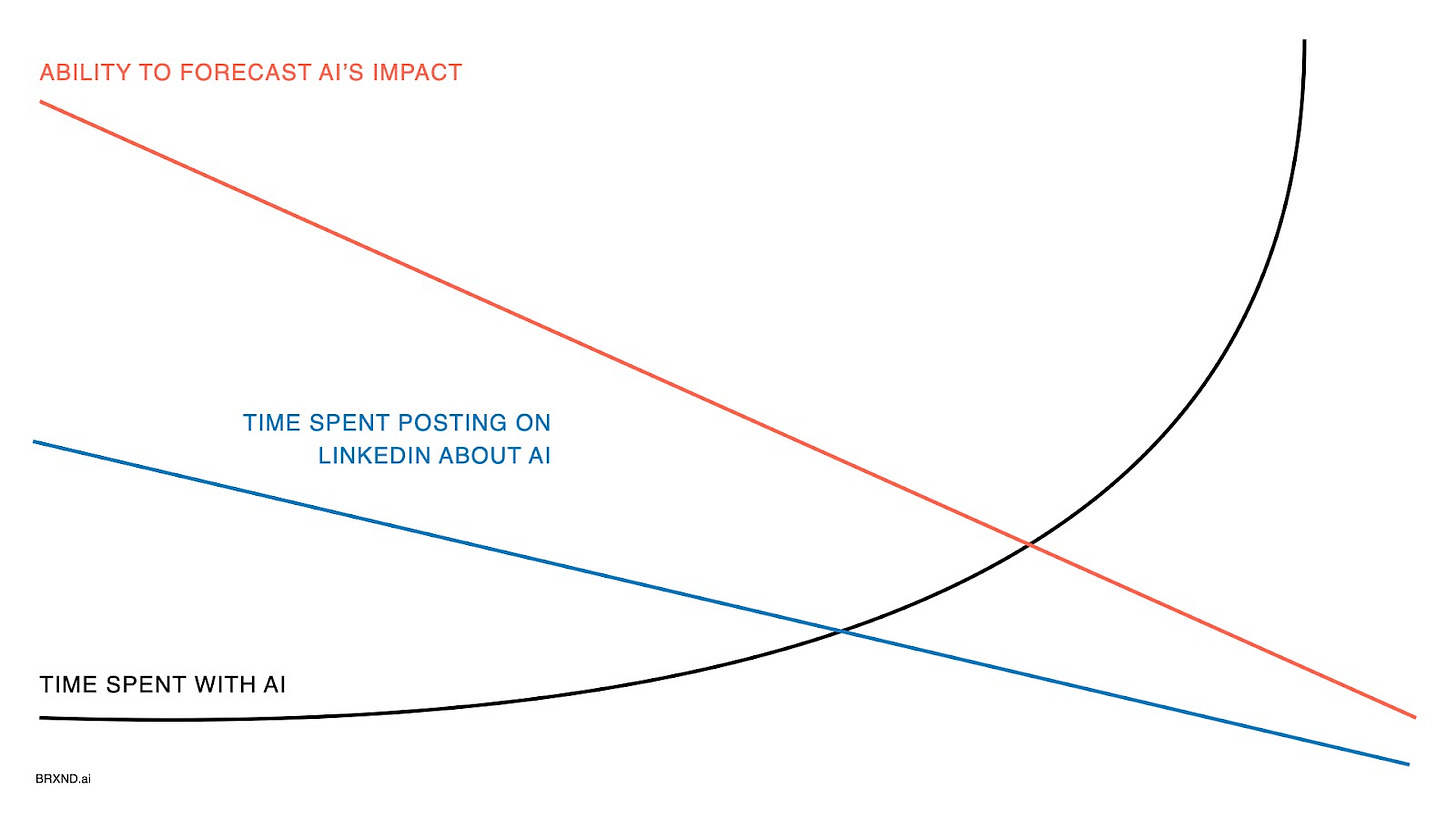

To that end, I’ve been thinking about updating my chart:

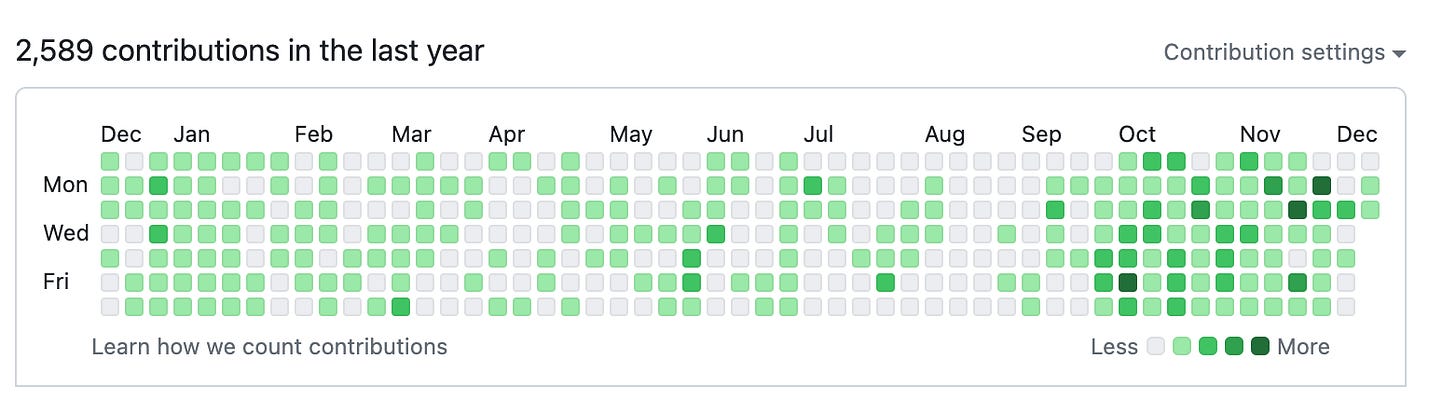

Of course, the other correlation here is my GitHub contributions, which basically track how much time I spend writing code (which is a good proxy for how much time I spend with AI).

That’s all nice and hopefully interesting, but the question remains: what do you do in a situation like this one?

My advice has been to play, experiment, and try to build up your AI intuition. But I also really liked this build on the point from Paul Graham last week, particularly the second tweet: “In a situation like this, there are two general pieces of advice one can give: keep your options open, and pay close attention to what's happening. Then if you can't predict the changes AI will cause, you'll at least be able to react quickly to them.” That’s a more direct way to say what I’ve been trying to get at: when you know something is important but you aren’t sure quite how, the best thing you can do is spend as much time with it as possible so you are ready for what comes next.

With all that said, I got asked recently for a few words of “practical advice” that would help a group of folks get themselves started with this stuff, and here’s how I replied:

Get a ChatGPT Plus account (they’re open for signups again!). It's $20 a month and well worth it. The jump in response quality from the free GPT-3.5 to GPT-4 is huge, and the access to both the web browser and advanced data analysis (the ability to upload Excel files, etc., and have it run Python to analyze them) is amazing.

Just play. I know I'm a broken record on this, but get a feel for what it's good and not good at.

Find some go-to use cases: Here are a few of my favorite basic use cases (remember that you can't guarantee your prompts won't be used as part of training, so don't upload anything confidential):

Content Summarization/Understanding: Ask ChatGPT to summarize something long, or, even better, if there's something you don't totally understand, like a scientific paper, upload it and ask questions to get it to help you understand.

Excel Functions: even if you don't write code, have ChatGPT write Excel functions for you.

Learning: When I'm learning about something new that's complicated (like how transformers work in machine learning), I have it start by explaining it to me like an eighth grader, then a 10th grader, 12th grader, and so on.

Clarity: I often throw an email or proposal into ChatGPT with a specific request to help me in any areas where things aren't clear enough.

Play with lots of models. I highly suggest clicking around replicate.com and just seeing what's there.

Stop listening to everyone on LinkedIn talking about how things work/what will happen/this magic prompt. It's largely nonsense. The people doing the most talking are doing the least. If you really want to go a level deeper with these things, check out more technical documents like this one from Lilian Weng or this one from LangChain (if you don't understand it, use ChatGPT to help).
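If you do write a little code, the "Learning" tip above (start at an eighth-grade level, then step up) is easy to turn into a reusable prompt ladder. This is just a rough sketch of how I might do it: the function name `grade_ladder_prompts` and the exact prompt wording are made up for illustration, and it only builds the text you would paste into ChatGPT, it doesn't call any API.

```python
def grade_ladder_prompts(topic, grades=(8, 10, 12)):
    """Build one prompt per grade level, easiest first.

    Hypothetical helper: the wording below is an assumption, not a
    "magic prompt". Tweak it to match how you like to learn.
    """
    prompts = []
    for grade in grades:
        prompts.append(
            f"Explain {topic} to me as if I were in grade {grade}. "
            "Build on any simpler explanation you have already given me."
        )
    return prompts


if __name__ == "__main__":
    # Paste these into the same chat one at a time, in order.
    for prompt in grade_ladder_prompts("how transformers work in machine learning"):
        print(prompt)
```

Keeping the steps in one chat matters more than the exact wording: each answer gives the model something to build on for the next level.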

I hope some of this is helpful and interesting. If you are doing interesting stuff with AI, please get in touch as I’m starting to plan my next conference (also, I just like hearing about this stuff). I’ll have more new toys to play with soon. Thanks again for sticking around with me and for all the great feedback along the way. There’s a chance I’ll be back again for another edition this year, but I might not. So, in that case, I hope everyone has a great holiday, and cheers to an excellent 2024.

As always, feel free to be in touch if there’s anything you want to talk about or I can help with.

— Noah
