Simulations // BrXnd Dispatch vol. 48

Building a fake terminal into a fake company's fake server

You’re getting this email as a subscriber to the BrXnd Dispatch, a (roughly) weekly email at the intersection of brands and AI. Wednesday, May 8, was the second BrXnd NYC Marketing X AI Conference, and it was amazing. If you missed it, I have shared a few talks here, and the rest are up on YouTube.

Daniel Gross and Nat Friedman were interviewed again last week for Stratechery, Ben Thompson’s newsletter (paywalled, sorry). And once again, it was the most to-the-point and insightful AI content I’ve consumed in recent memory. There’s such a big difference between the broader commentary and listening to people who deeply know what they’re talking about and have their sleeves rolled up.

Amongst the many smart things they said (a few more of which I will likely cover in another newsletter soon) was this comment about Sora from Nat Friedman:

I interviewed the Sora creators yesterday, the day before on stage at an event and it was super interesting to hear their point of view. I think we see Sora as this media production tool, that’s not their view, that’s a side effect. Their view is that it is a world simulator and that in fact it can sort of simulate any kind of behavior in the world, including going as far as saying, “Let’s create a video with Ben and Daniel and Nat and have them discuss this,” and then see where the conversation goes. And their view is also that Sora today is a GPT-1 scale, not a lot of data, not a lot of compute, and so we should expect absolutely dramatic improvement in the future as they simply scale it up and thirdly that there’s just a lot more video data than there is text data on the Internet. I think estimates are that YouTube has about an exabyte of data, Common Crawl is orders of magnitude smaller and it’s just text.

I’ve seen and read about this simulation thing mentioned before and considered it, but like a lot of stuff with AI, it didn’t really stick with me until I felt it, which happened yesterday.

While working on a totally different experiment (more on that soon, I hope), a friend suggested that it might be fun to build an admin interface that people can “hack” into. This did sound fun and even more fun if I could make it a fake shell for the company. This idea was somewhat inspired by this fun dive into running ChatGPT as a virtual machine from way back in December 2022 (remember those days?).

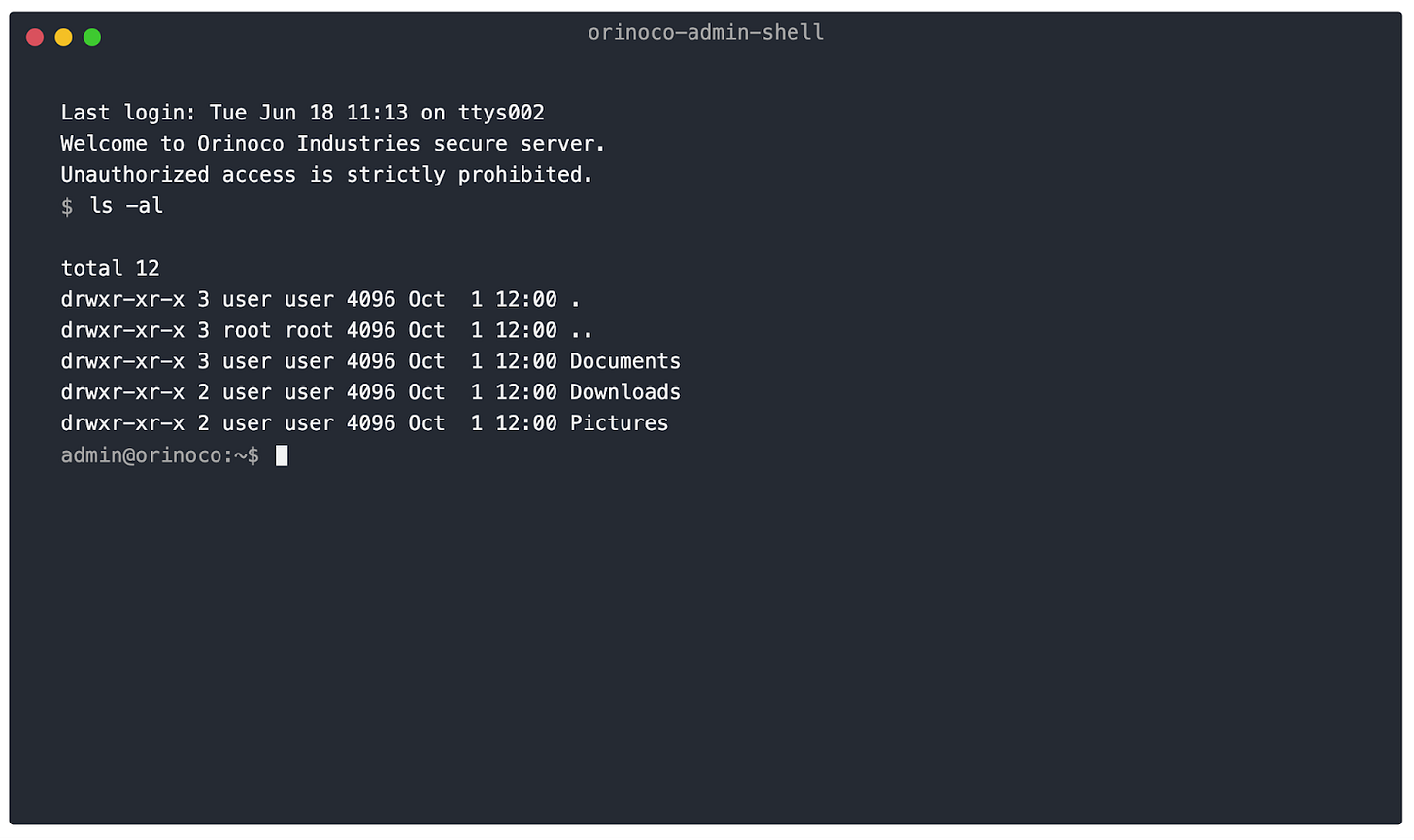

Anyway, I got to coding. The idea was simple: create an interface that looks like a terminal and lets users type in Unix commands, which are then fed to GPT-4o, which responds with the terminal’s output. After a bit of time building my own interface, I stumbled on React Terminal UI, and I was off to the races (the fake company is called Orinoco, but don’t worry about that part for now).
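For the curious, here is a minimal Python sketch of that loop. It is not the actual code behind the experiment. The class name `FakeTerminal`, the wording of `SYSTEM_PROMPT`, and the `complete` callable (a stand-in for whatever function sends the messages to GPT-4o and returns its reply) are all assumptions made for illustration.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical system prompt; the real prompt is only partly quoted in the post.
SYSTEM_PROMPT = (
    "You are a Linux server belonging to a company called Orinoco. "
    "Reply only with the terminal output for each command, nothing else."
)


class FakeTerminal:
    """Keeps the whole session as chat history so the model can stay consistent."""

    def __init__(self, complete: Callable[[List[Message]], str],
                 system_prompt: str = SYSTEM_PROMPT) -> None:
        # `complete` is an assumed stand-in for a call to a chat model.
        self.complete = complete
        self.messages: List[Message] = [{"role": "system", "content": system_prompt}]

    def run(self, command: str) -> str:
        # The typed command goes in as a user message...
        self.messages.append({"role": "user", "content": command})
        # ...and whatever the model says back is treated as terminal output.
        output = self.complete(self.messages)
        self.messages.append({"role": "assistant", "content": output})
        return output


def canned_model(messages: List[Message]) -> str:
    """Offline stand-in for the model so the sketch runs without an API key."""
    last = messages[-1]["content"]
    return {"pwd": "/home/admin"}.get(last, f"bash: {last}: command not found")


terminal = FakeTerminal(canned_model)
print(terminal.run("pwd"))
```

The design choice worth noticing is that nothing is stored anywhere except the message list. In a setup like this, any "files" or "installed software" exist only because earlier commands and outputs are still in the transcript the model sees.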

That’s fake. But, thanks to the AI, you can type actual Unix commands, and it will “run” them. If you want to see where you are in the filesystem, just run ls -al.

Already, that is kind of ridiculous. If you want to see just how ridiculous it is, open Terminal or the equivalent on your computer and type ls -al (“ls” is the command to list the contents of a directory; the “-al” options tell it to include hidden files and to show details like permissions, owner, and size). That’s fun and weird, but then I just kept trying more stuff.
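If you have never looked closely at what a real machine does for that command, this rough Python analogue may help. It is only an approximation: the function name `list_all_long` is made up, and the real ls also prints owner, group, link count, and timestamps, which are left out here.

```python
import os
import stat


def list_all_long(path="."):
    """Rough analogue of `ls -al`: every entry, with its mode string and size."""
    # os.listdir already includes dotfiles but omits "." and "..", so add those back.
    names = [".", ".."] + sorted(os.listdir(path))
    rows = []
    for name in names:
        info = os.lstat(os.path.join(path, name))
        # stat.filemode turns the numeric mode into a string like "-rw-r--r--".
        rows.append(f"{stat.filemode(info.st_mode)} {info.st_size:>10} {name}")
    return rows


for row in list_all_long("."):
    print(row)
```

A real computer answers this by asking the filesystem. The fake terminal has no filesystem to ask, so every line of its listing is generated text that merely looks like this.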

At first, I kept it pretty simple, like navigating the file system. But then I was curious whether I could create files and even write and run code. I had to adjust the prompt a bit to allow me to install a text editor, but then I was off to the races.

What I did here was create a file and then use Nano, a text editor for Linux, to open that file. Again, that’s not really what I did because all this exists in the “mind” of the AI, but that’s what it’s simulating for me. But writing code and running code are different things, so what happens if I run this super simple code? (It just runs a loop five times and prints the numbers one through five.) Unsurprisingly, because of the program's simplicity, the fake computer ran it without issue, printing out 1-5.
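The exact program is not reproduced in this post, so the following is a guess at its shape rather than the original: a Python loop that runs five times and prints 1 through 5. The function name `count_up` is an assumption.

```python
def count_up(limit=5):
    """Print 1 through `limit`, one number per line, and return what was printed."""
    numbers = []
    for n in range(1, limit + 1):
        print(n)
        numbers.append(n)
    return numbers


count_up()
```

A program this small is easy for a model to "run" in its head, since the output can be read straight off the source.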

Again, at this point, it’s worth pausing to reinforce how wild this all is. This isn’t a computer at all. It’s an AI pretending to be a computer. When I say I told it to install some software, I didn’t run a command, I just updated the prompt: “Support all common Linux commands and apps like ll, ls, cd, cat, sudo, grep, vim, nano, curl, ssh, and more (if it's valid on Linux you should probably allow it). The system can run vim and nano, and they can also install additional software with curl and apt-get install.” I had many moments throughout this where I just started cracking up because what the computer was doing was so normal. After I wrote that Python, for instance, I tried to run my code but couldn’t because I hadn’t set the file’s execute permission.
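To make the "installing software is just editing the prompt" point concrete, here is a small sketch. The capabilities text is the sentence quoted above; `BASE_PROMPT` and `build_system_prompt` are hypothetical names and wording, not the actual setup.

```python
# Hypothetical base instructions; the full original prompt is not shown in the post.
BASE_PROMPT = (
    "You are simulating a Linux shell on a company server. "
    "Respond only with what the terminal would print."
)

# The capability rule quoted in the post, added to "install" editors and tools.
CAPABILITIES = (
    "Support all common Linux commands and apps like ll, ls, cd, cat, sudo, grep, "
    "vim, nano, curl, ssh, and more (if it's valid on Linux you should probably "
    "allow it). The system can run vim and nano, and they can also install "
    "additional software with curl and apt-get install."
)


def build_system_prompt(base, extra_rules=()):
    """Join the base instructions and any extra rules into one system prompt."""
    return "\n\n".join([base, *extra_rules])


prompt = build_system_prompt(BASE_PROMPT, [CAPABILITIES])
print(prompt)
```

Under this framing, "granting" the machine a new ability is a one-line text change, with no package manager involved.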

Eventually, I decided to try installing some software like Postgres (my database of choice), creating tables, and inserting data. I put together this little video of me doing a bunch of random stuff. It includes lots of breaks because, in another window, I was asking ChatGPT for the commands to run.

Ok, so what do we make of all this? Well, first off, I am going to think a lot more about the possibilities of simulation with these tools. Most of what I’ve been doing lately has been building workflows and trying to get the AI to generate or give feedback on content, but running simulations like this (or not really like this) is interesting and weird. I don’t really know what or how, but like most of the stuff I’ve taken away from my many experiments, it’s tough to fully wrap your mind around until you get in there and start playing. Also, to that end, if there’s some interest, maybe I can stick this thing up somewhere. Just leave a comment or drop me a line if you want to try it out.

Thanks for reading, subscribing, and supporting. As always, if you have questions or want to chat, please be in touch.

Thanks,

Noah